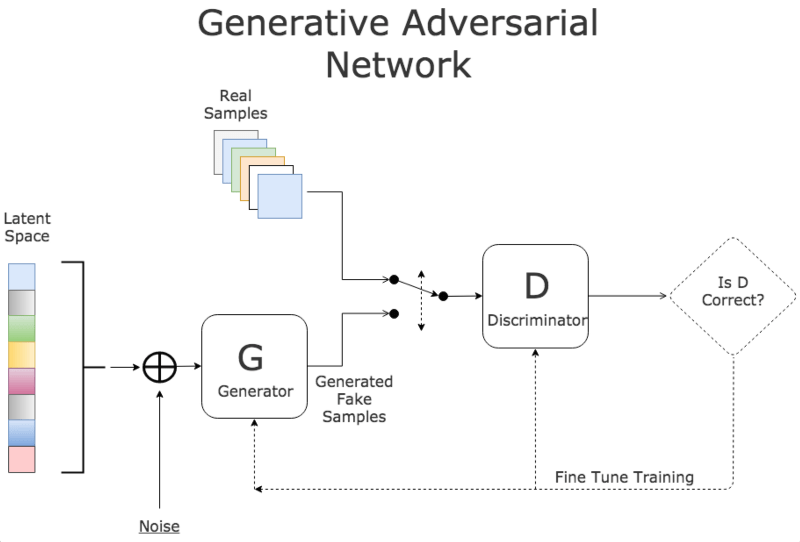

Generative models, such as Generative Adversarial Networks (GANs), are a rapidly advancing area of research in computer science and machine intelligence. It's hard to keep track of them all, not to mention the incredibly creative things researchers have achieved with them and are still working on.

The following figures show some results from current work (images from https://blog.openai.com/generative-models/).

I think it is necessary to understand the basic pros and cons of these models, and doing so may be very helpful to your own research. I have not fully reviewed the theory and the papers, but after skimming a few of them, I got the impression that training a GAN is very tricky, as is training any neural network model. So there must be a lot of room for improvement.
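
One way to see where that trickiness comes from, as a rough sketch rather than a full account: in the original GAN formulation (Goodfellow et al., 2014), a generator $G$ and a discriminator $D$ are trained against each other on a single min-max objective. The symbols below ($p_{\text{data}}$ for the data distribution, $p_z$ for the noise prior) follow that paper's notation and are not taken from anything else in this post.

$$
\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z(z)}\big[\log\big(1 - D(G(z))\big)\big]
$$

Because $G$ minimizes the same quantity that $D$ maximizes, training looks for a saddle point rather than a plain minimum, which is a commonly cited reason why the two networks are hard to keep in balance.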

Thanks to the internet, papers and code are everywhere, and nobody will be left behind these days unless he or she wants to be. So work hard and be a better man (or woman, or anything good for humanity). Cheers!

Here are some papers and blogs that summarize the literature very well.

Here are the slides and notes from one of my old group meetings, with download links.

Extra sources:
