I still need more time to read and understand MCMC and RBMs. In the meantime, I’d like to share some learning materials with everyone. I hope you find them helpful.

- An Introduction to MCMC for Machine Learning

- Reducing the Dimensionality of Data with Neural Networks

- A fast learning algorithm for deep belief nets

- A Practical Guide to Training Restricted Boltzmann Machines

- An Introduction to Restricted Boltzmann Machines

- Training restricted Boltzmann machines: An introduction

- Sparse deep belief net model for visual area V2

- Classification using Discriminative Restricted Boltzmann Machines

- Learning Invariant Representations with Local Transformations

- Unsupervised Feature Learning and Deep Learning: A Review and New Perspectives

- Relevant literature

- To be continued …

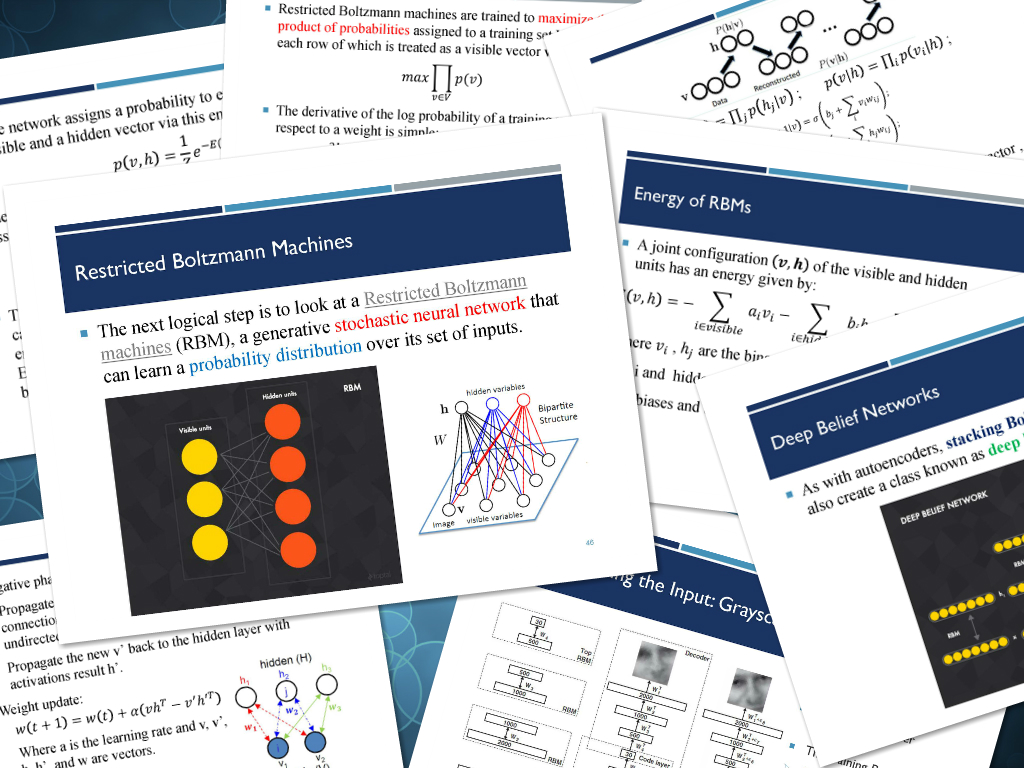

Recently, I have been working very hard on digging into the Restricted Boltzmann Machine (RBM) from Deep Learning. Even though I can do all the programming tasks, I still do not feel “deeply” and “fully connected” with the theory and the philosophy, perhaps because I lack a solid background in statistics.

MCMC and Gibbs sampling are very important parts of RBMs and Deep Belief Networks, so it is important for every CS student to master these skills.

In the famous Science paper “Reducing the Dimensionality of Data with Neural Networks”, Hinton and Salakhutdinov used RBMs to initialize the weights of deep neural networks (here, deep autoencoders), which led to very surprising results. With the Contrastive Divergence (CD-k) method, RBMs can be trained quickly enough to serve as building blocks for Deep Belief Networks and other frameworks. Now, after about 10 years of research and development, RBMs are used in various ways to build models.

Abstract: High-dimensional data can be converted to low-dimensional codes by training a multilayer neural network with a small central layer to reconstruct high-dimensional input vectors. Gradient descent can be used for fine-tuning the weights in such “autoencoder” networks, but this works well only if the initial weights are close to a good solution. We describe an effective way of initializing the weights that allows deep autoencoder networks to learn low-dimensional codes that work much better than principal components analysis as a tool to reduce the dimensionality of data.
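To make the CD-k training mentioned above more concrete, here is a minimal NumPy sketch of one CD-k update for a binary RBM. It is an illustration under stated assumptions, not the code from any of the papers listed: the function name `cd_k_update`, the variable names, the learning rate, and the random toy data are all hypothetical choices made for this example.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def cd_k_update(v0, W, b, c, k=1, lr=0.1, rng=None):
    """One CD-k update for a binary RBM (hypothetical helper, k >= 1).

    v0: batch of binary visible vectors, shape (batch, n_visible)
    W:  weights, shape (n_visible, n_hidden)
    b:  visible biases, c: hidden biases
    """
    rng = np.random.default_rng() if rng is None else rng

    # Positive phase: hidden probabilities given the data.
    ph0 = sigmoid(v0 @ W + c)
    h = (rng.random(ph0.shape) < ph0).astype(v0.dtype)

    # Negative phase: k steps of block Gibbs sampling, started at the data.
    for _ in range(k):
        pv = sigmoid(h @ W.T + b)                      # p(v = 1 | h)
        vk = (rng.random(pv.shape) < pv).astype(v0.dtype)
        phk = sigmoid(vk @ W + c)                      # p(h = 1 | v)
        h = (rng.random(phk.shape) < phk).astype(v0.dtype)

    # Approximate gradient: data statistics minus k-step sample statistics.
    n = v0.shape[0]
    W = W + lr * (v0.T @ ph0 - vk.T @ phk) / n
    b = b + lr * (v0 - vk).mean(axis=0)
    c = c + lr * (ph0 - phk).mean(axis=0)

    # Mean squared reconstruction error, a monitoring signal only.
    recon_error = np.mean((v0 - pv) ** 2)
    return W, b, c, recon_error

# Toy usage on random binary data (assumed sizes: 6 visible, 3 hidden units).
rng = np.random.default_rng(0)
data = (rng.random((64, 6)) < 0.5).astype(np.float64)
W = 0.01 * rng.standard_normal((6, 3))
b = np.zeros(6)
c = np.zeros(3)
for epoch in range(100):
    W, b, c, err = cd_k_update(data, W, b, c, k=1, lr=0.1, rng=rng)
```

Setting `k=1` gives CD-1. A larger `k` runs the Gibbs chain longer before the negative statistics are collected, at a higher cost per update.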

Here, I list some questions for future discussion:

- How should the number of hidden nodes in each RBM layer be chosen?

- What is the potential damage when Gibbs sampling and the Markov chain are not run as the theory requires? For example, in practice we usually apply only CD-1.

- How can I know when the RBM has converged to stationarity? In other words, how many iterations do I need?

- When evaluating an RBM, why is it acceptable to use the reconstruction error instead of the log-likelihood?

- Is an RBM learning features or learning the data distribution?
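As background for the CD-1 and log-likelihood questions above, the standard formulation of a binary RBM can be written as follows. The notation is assumed for this note rather than taken from the post: $v$ and $h$ are the visible and hidden vectors, $W$ the weights, $b$ and $c$ the visible and hidden biases, and $Z$ the partition function.

$$
E(v, h) = -b^\top v - c^\top h - v^\top W h, \qquad
p(v, h) = \frac{e^{-E(v, h)}}{Z}
$$

$$
\frac{\partial \log p(v)}{\partial W_{ij}}
= \langle v_i h_j \rangle_{\text{data}} - \langle v_i h_j \rangle_{\text{model}},
\qquad
\Delta W_{ij}^{\text{CD-}k} \propto \langle v_i h_j \rangle_{\text{data}} - \langle v_i h_j \rangle_{k}
$$

The model expectation would require running the Gibbs chain until it reaches stationarity. CD-k replaces it with statistics collected after only $k$ Gibbs steps started at the data, so the update follows an approximation of the log-likelihood gradient rather than the gradient itself.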
