Latest

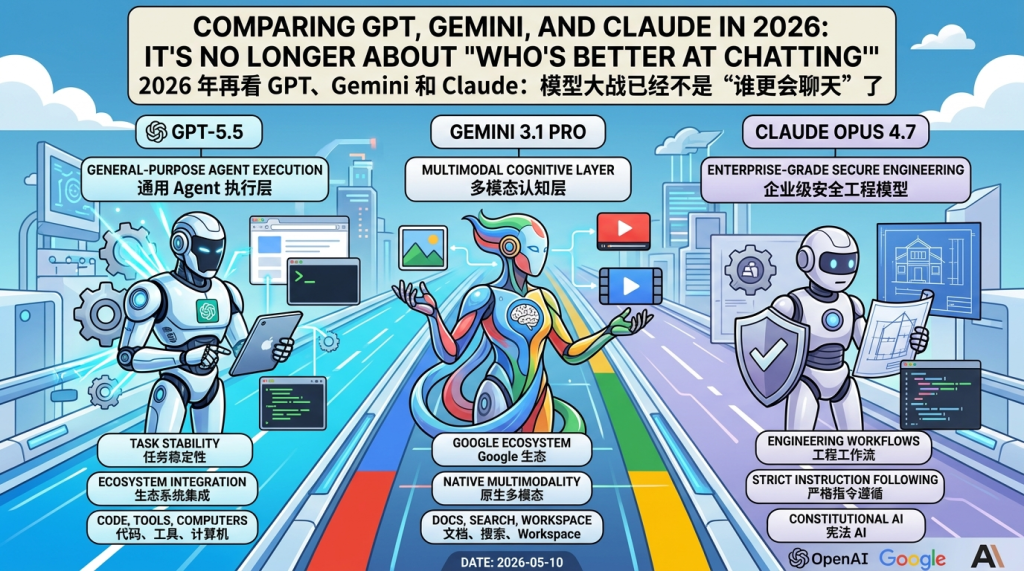

Comparing GPT, Gemini, and Claude in 2026: It’s No Longer About ‘Who’s Better at Chatting’

If you’re still comparing ChatGPT, Gemini, and Claude as three chatbots, the AI world of 2026 probably looks a bit strange to you. Today’s real competition is no longer about “who answers more like a human” or “who memorizes more knowledge.” What OpenAI GPT-5.5, Google Gemini 3.1 Pro, and Anthropic Claude Opus 4.7 represent…