Understanding this dilemma will help you see why it is so hard to align moral algorithms with human values.

The original paper can be found in Science: The social dilemma of autonomous vehicles.

Abstract: Autonomous vehicles (AVs) should reduce traffic accidents, but they will sometimes have to choose between two evils, such as running over pedestrians or sacrificing themselves and their passenger to save the pedestrians. Defining the algorithms that will help AVs make these moral decisions is a formidable challenge …

It is widely expected that autonomous vehicles (AVs) will change the world. AVs have the potential to benefit society by increasing traffic efficiency, reducing pollution, and eliminating up to 90% of traffic accidents.

The problem is that not all crashes can be avoided. Some crashes will require AVs to make difficult ethical decisions in cases that involve unavoidable harm.

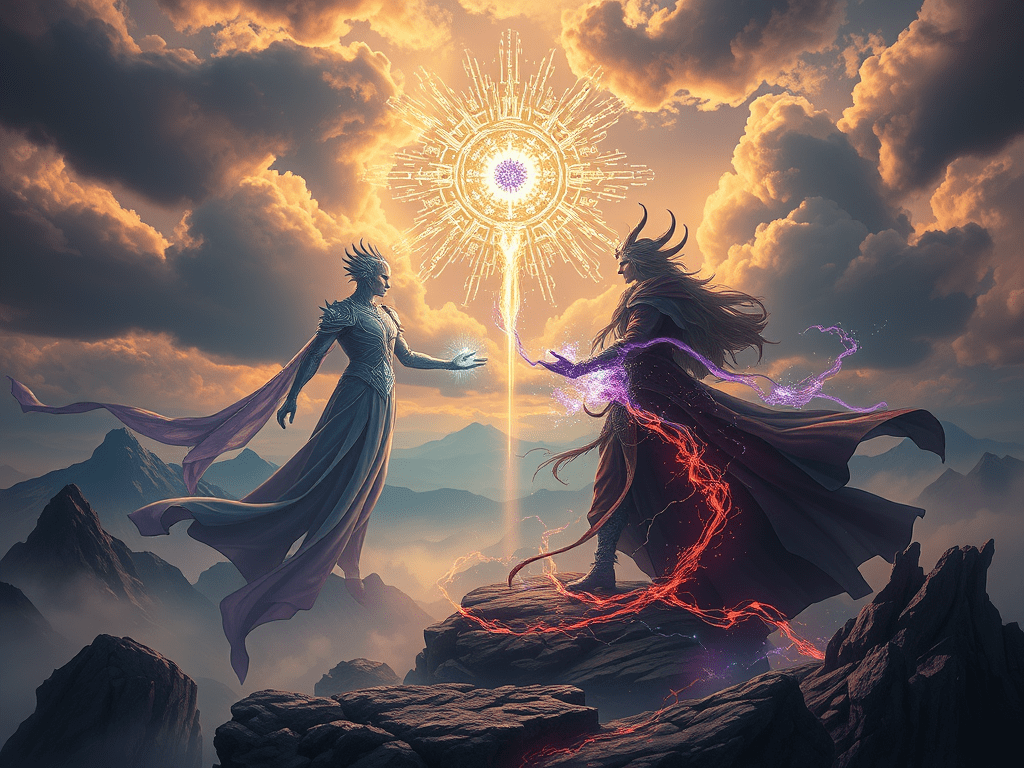

The figure shows three scenarios of exactly this kind.

The AV may avoid harming several pedestrians by swerving and sacrificing a passerby (Fig. 1A), or the AV may be faced with the choice of sacrificing its own passenger to save one or more pedestrians (Fig. 1B and 1C).

Even if these scenarios never arise, the AV's programming must still include decision rules about what to do in such hypothetical situations.

Thus, the algorithm that controls the AV needs to embed moral principles that guide its decisions in situations of unavoidable harm.
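To make "decision rules" a bit more concrete, here is a minimal sketch in Python. It is purely illustrative and not taken from the paper: the `Option` type, the two rule functions, and the casualty numbers are all hypothetical, and a real AV would not have such clean, certain harm estimates. The sketch only shows how two plausible moral principles can pick opposite maneuvers in the same situation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """One possible maneuver and the harm it is assumed (hypothetically) to cause."""
    name: str
    pedestrians_harmed: int
    passengers_harmed: int


def minimize_total_harm(options):
    """Hypothetical rule: choose the maneuver that harms the fewest people overall."""
    return min(options, key=lambda o: o.pedestrians_harmed + o.passengers_harmed)


def protect_passengers(options):
    """Hypothetical rule: protect passengers first, then minimize harm to pedestrians."""
    return min(options, key=lambda o: (o.passengers_harmed, o.pedestrians_harmed))


# Made-up numbers for a situation where the AV can either stay on course
# and hit several pedestrians, or swerve and sacrifice its own passenger.
scenario = [
    Option("stay", pedestrians_harmed=10, passengers_harmed=0),
    Option("swerve", pedestrians_harmed=0, passengers_harmed=1),
]

print(minimize_total_harm(scenario).name)  # swerve
print(protect_passengers(scenario).name)   # stay
```

The two rules disagree on the same input, which is the heart of the dilemma: whichever principle is hard-coded, someone will find the outcome unacceptable.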

Manufacturers and regulators will need to accomplish three potentially incompatible objectives: being consistent, not causing public outrage, and not discouraging buyers.

Hopefully this problem will be solved in the future.
