2021-Jan-31: The git repo has been upgraded from PyTorch 0.3.0 to PyTorch 1.7.0, with Python 3.8.3.

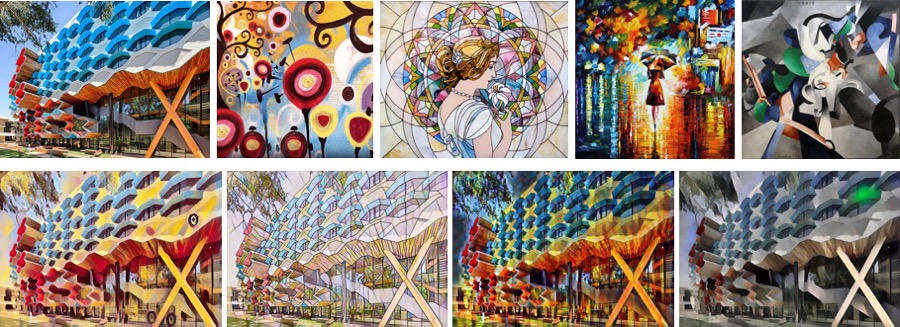

Continuing from my last post, Image Style Transfer Using ConvNets by TensorFlow (Windows), this article introduces Fast Neural Style Transfer with PyTorch on macOS.

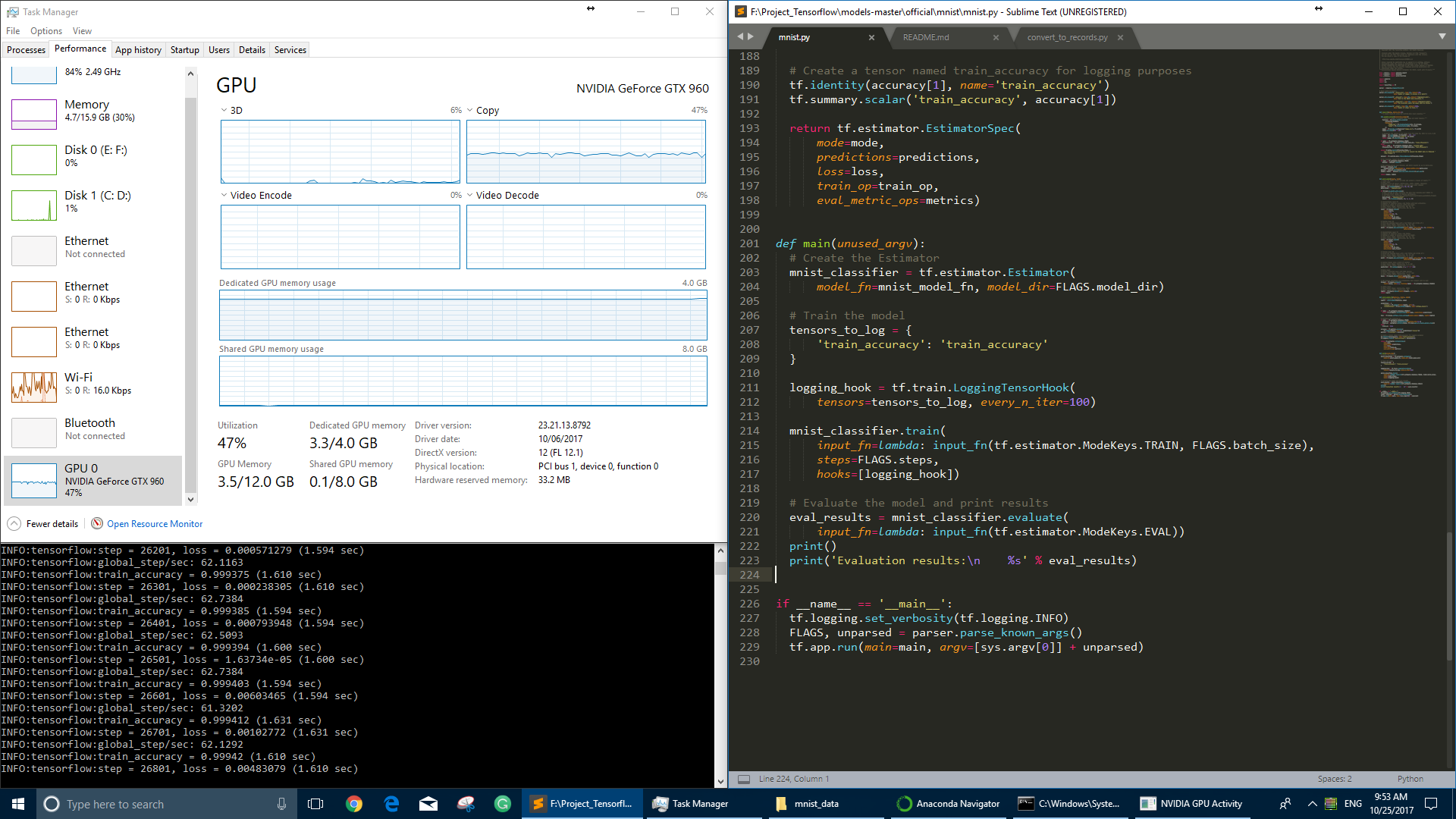

The original program is written in Python and uses [PyTorch] and [SciPy]. A GPU is not required, but it can provide a significant speedup, especially when training a new model. With saved models, regular-sized images can be stylized on an ordinary laptop or desktop.

More details about the algorithm can be found in the following papers:

- Perceptual Losses for Real-Time Style Transfer and Super-Resolution (2016).

- Instance Normalization: The Missing Ingredient for Fast Stylization (2017).
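To make the two papers a little more concrete, here is a minimal NumPy sketch of one building block from each: the Gram-matrix style loss from *Perceptual Losses* and the instance normalization from *Instance Normalization*. This is not the code from my repo (which uses PyTorch, where `torch.nn.InstanceNorm2d` provides the normalization). The function names are hypothetical, feature maps are assumed to be `(C, H, W)` arrays already taken from a loss network such as VGG-16, and the learned per-channel scale and shift of instance normalization are omitted.

```python
import numpy as np


def gram_matrix(features):
    """Gram matrix of a (C, H, W) feature map, normalized by C*H*W
    as in the style reconstruction loss of Johnson et al."""
    c, h, w = features.shape
    f = features.reshape(c, h * w)  # one row per channel
    return f @ f.T / (c * h * w)   # channel-by-channel correlations


def style_loss(feat_out, feat_style):
    """Squared Frobenius distance between the Gram matrices of the
    stylized output's features and the style image's features."""
    diff = gram_matrix(feat_out) - gram_matrix(feat_style)
    return float(np.sum(diff ** 2))


def instance_norm(x, eps=1e-5):
    """Normalize each (sample, channel) slice of an (N, C, H, W) batch
    over its own spatial dimensions (no learned scale/shift here)."""
    mean = x.mean(axis=(2, 3), keepdims=True)
    var = x.var(axis=(2, 3), keepdims=True)  # biased variance (ddof=0)
    return (x - mean) / np.sqrt(var + eps)
```

The key difference from batch normalization is the reduction axes: instance normalization averages only over `H` and `W`, so each image's contrast is normalized independently of the rest of the batch, which is what the second paper argues helps fast stylization.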

If you cannot download the papers, copies are available here: Papers.

You can find all the source code and images (updated in 2021) on my GitHub: fast_neural_style.

Continue reading “Fast Neural Style Transfer by PyTorch (Mac OS)”